For years, the AI industry optimized for benchmark performance. Almost nobody measured what actually happened in production.

Enterprises rapidly scaled AI infrastructure across clouds, GPUs, accelerators, and inference platforms — yet many still cannot independently verify what that infrastructure is actually delivering once deployed at scale.

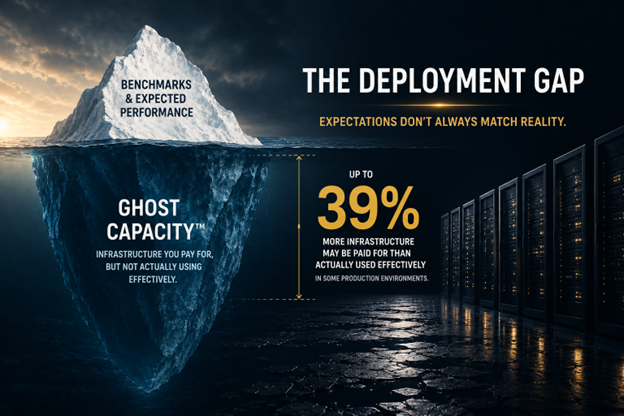

That is the AI Deployment Gap.

Today, RunIQ Labs officially emerges from stealth to help close it.

We have spent decades inside the AI silicon and infrastructure stack — from Qualcomm and AMD to hands-on deployment benchmarking across NVIDIA, AMD, and AWS environments.

What that experience taught us more than anything else is this:

The people selling you AI infrastructure are not positioned to independently measure whether your deployment is actually operating efficiently in production.

Vendor tools are optimized to expose the capabilities of their own layer. Cloud dashboards stop at the abstraction boundary. Benchmarks measure short-duration potential under controlled conditions. Internal teams inherit metrics that were never designed to reflect sustained production reality.

No single party in the AI stack owns cross-layer deployment efficiency.

And no single party has the incentive to independently verify it.

That gap is not simply a technology problem.

It is an ownership problem.

What We Kept Seeing in Production

We have watched enterprises commit tens of millions of dollars to AI infrastructure based on benchmark expectations that changed dramatically once workloads entered sustained production operation.

We have watched deployment configuration choices — not hardware, not models, not precision — create throughput swings of more than 22 percent across identical infrastructure environments.

We have watched short-duration benchmark conditions inflate efficiency metrics by up to 39 percent versus sustained production execution.

And we have watched organizations provision, power, and pay for compute that delivered no economically useful work — with no independent way to measure where the loss originated.

We call this Ghost Capacity™.

“Ghost Capacity™ is AI infrastructure that is provisioned, powered, and paid for, but not delivering economically useful work.”

Ghost Capacity™ does not announce itself.

It compounds silently — billing cycle after billing cycle — inside organizations that have never independently measured what their AI infrastructure is actually delivering.

RunIQ Labs was built to FIND it.

To RECOVER it.

To DEFEND it.

The Measurement Layer That Was Missing

RunIQ Labs is the independent measurement layer enterprise AI infrastructure was missing.

We built the company on a simple premise:

“The referee cannot be owned by one of the teams.”

Through our Deployment Diagnostic Records (DDR) and RunIQScore™ framework, RunIQ Labs independently measures production AI deployments across heterogeneous infrastructure environments.

We analyze:

- Performance

- Power

- Cost

- Throughput efficiency

- Infrastructure utilization

- Deployment variance

- Ghost Capacity™ recovery opportunity

Every engagement produces deployment-grade evidence showing:

- What your infrastructure is actually delivering

- What it should be delivering

- Where efficiency loss is occurring

- What the gap is costing you economically

We do not replace vendor tooling.

We provide what vendor tooling structurally cannot: an independent, cross-layer view of production reality.

This is not synthetic benchmarking.

This is not vendor marketing.

This is independent AI deployment intelligence.

Why This Matters Now

AI infrastructure is no longer an R&D experiment. It is becoming one of the largest infrastructure investment cycles in modern enterprise history.

CIOs and CFOs are now being asked to justify massive AI infrastructure commitments without an independent way to verify sustained production efficiency.

At the same time, the underlying infrastructure ecosystem has fragmented dramatically.

Jon Peddie Research has documented the rapid expansion of AI processor suppliers, architectures, and deployment categories across the global market — creating increasingly complex deployment environments spanning GPUs, accelerators, inference processors, cloud platforms, orchestration layers, and runtime stacks.

Every infrastructure category introduces its own telemetry systems, abstraction boundaries, optimization assumptions, and deployment behavior.

The result is a growing measurement gap between what AI infrastructure is expected to deliver and what it actually delivers once deployed at scale.

As Jon Peddie recently stated:

“RunIQ is doing the work the market structure requires.”

That is the environment RunIQ Labs was built for.

Launching Out of Stealth

Today, RunIQ Labs officially emerges from stealth.

Alongside today’s launch, we are releasing:

- Our launch whitepaper: “What Really Happens When You Run AI at Scale — How Ghost Capacity™ Impacts the Bottom Line”

- Our official launch press release

- A one-page Media Brief: The Reality Behind AI Infrastructure Is Finally Visible

- The introduction of the RunIQ Labs deployment intelligence platform

The whitepaper includes real deployment measurement data across NVIDIA H100, A100, AMD MI300X, and AWS Inferentia environments — examining the growing gap between benchmark expectations and sustained production execution.

Some of the findings surprised even us. Particularly around deployment variance, power-constrained efficiency behavior, and the widening disconnect between theoretical AI performance and real-world economic output.

The AI industry does not need more dashboards.

It needs independent operational truth.

FIND. RECOVER. DEFEND.