Deploy AI Models Efficiently Across Any Compute Platform

We measure real workloads on real infrastructure to tell you exactly where your AI runs best — for performance, power, and cost.

AI Performance Claims Don't Match Deployment Reality

Benchmark results rarely match production behavior. Vendor-defined metrics obscure power, cost, and sustained throughput. VM-based or synthetic benchmarks hide real infrastructure effects — and no neutral standard for comparing AI deployments has existed. Until now.

- Typical: Unrealistic simulations in VM layers that never reflect production conditions
  RunIQ: Production hardware, real workloads, real deployment conditions

- Typical: Power and cost metrics obscured by vendor-defined benchmarks
  RunIQ: Performance, power, and cost measured transparently on every run

- Typical: Lab conditions that measure best-case, not sustained performance
  RunIQ: Continuous measurement under real serving conditions, not snapshots

- Typical: Vendor-locked ecosystems with opaque, non-comparable results
  RunIQ: Independent, reproducible results across every accelerator platform

Deployment-Grade AI Benchmarking and Intelligence

Real infrastructure. Real workloads. Vendor-neutral. We profile, benchmark, optimize, and certify AI deployments on production hardware — not synthetic VMs.

AI Model Profiling

Compute, memory, latency, and power profiling across real infrastructure — cloud, on-prem, and hybrid. The foundation everything else builds on.

Production Benchmarking

Sustained performance measurement using industry and workload-specific benchmarks across accelerator platforms.

Optimization & Porting

Hardware-aware optimization focused on performance-per-watt and cost-per-inference, with platform porting across heterogeneous accelerators.

Certification & Deployment

Production-grade validation and deployment readiness certification — verified by RunIQScore.

Deployment-First by Design

RunIQ measures what customers actually experience. Every benchmark runs on real hardware, under production conditions, with full Performance-Power-Cost (PPC) visibility. No synthetic VMs. No peak-only metrics. No vendor lock-in.

Typical Benchmarks

- Synthetic / VM-Based

- Peak / Lab Conditions

- Isolated Metrics

- Opaque Results

- Vendor-Locked Ecosystems

RunIQ Labs AI

- Real Infrastructure

- Sustained Workloads

- PPC Unified Scoring

- Reproducible & Auditable

- Vendor-Neutral & Open

Deployment Intelligence, Quantified

RunIQScore is a vendor-neutral, deployment-grade scoring system that quantifies how AI models perform in real production environments across three dimensions: performance, power, and cost.

Performance

Sustained throughput, latency, and stability measured under real serving conditions.
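
As a rough illustration of what these three quantities can look like in practice, the sketch below summarizes one sustained serving run from per-request records. It is not RunIQ's method: the function name `summarize_serving`, its inputs, and the use of the latency coefficient of variation as a stand-in for "stability" are all assumptions made for this example.

```python
from statistics import mean, pstdev, quantiles


def summarize_serving(latencies_s, tokens_out, wall_time_s):
    """Summarize one sustained run (hypothetical helper, not a RunIQ API).

    latencies_s: per-request end-to-end latency in seconds
    tokens_out:  tokens generated per request
    wall_time_s: total wall-clock duration of the run in seconds
    """
    if len(latencies_s) < 2 or wall_time_s <= 0:
        raise ValueError("need at least two requests and a positive duration")

    # Sustained throughput: all tokens produced over the whole run,
    # not the best short window.
    tokens_per_s = sum(tokens_out) / wall_time_s

    # quantiles(n=100) returns 99 cut points: index 49 is p50, index 98 is p99.
    cuts = quantiles(latencies_s, n=100, method="inclusive")

    # Assumed stability proxy: coefficient of variation of latency
    # (lower means steadier serving).
    latency_cv = pstdev(latencies_s) / mean(latencies_s)

    return {
        "tokens_per_s": tokens_per_s,
        "p50_s": cuts[49],
        "p99_s": cuts[98],
        "latency_cv": latency_cv,
    }
```

Reporting the tail (p99) alongside the median is the point of the example: a peak-only or average-only figure can hide the slow requests that users actually notice.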

Power

Energy consumption and efficiency measured during actual workload execution — tokens per watt, joules per token, watts under load.
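
The arithmetic behind these power metrics can be sketched as follows, assuming timestamped power readings (in watts) were collected while the workload ran. The helper names and the trapezoidal integration are assumptions for illustration, not a description of RunIQ's instrumentation. Note that "tokens per watt" is usually shorthand for tokens per second per watt, which is the same quantity as tokens per joule.

```python
def energy_joules(timestamps_s, power_w):
    """Integrate sampled power over time with the trapezoidal rule."""
    if len(timestamps_s) != len(power_w) or len(timestamps_s) < 2:
        raise ValueError("need matching lists with at least two samples")
    total = 0.0
    for i in range(1, len(timestamps_s)):
        dt = timestamps_s[i] - timestamps_s[i - 1]
        # Average of two adjacent readings times the interval between them.
        total += 0.5 * (power_w[i] + power_w[i - 1]) * dt
    return total


def power_metrics(timestamps_s, power_w, tokens):
    """Derive energy metrics for one run (hypothetical helper)."""
    if tokens <= 0:
        raise ValueError("tokens must be positive")
    energy = energy_joules(timestamps_s, power_w)
    duration = timestamps_s[-1] - timestamps_s[0]
    return {
        "avg_watts": energy / duration,       # watts under load
        "joules_per_token": energy / tokens,  # energy cost of one token
        "tokens_per_joule": tokens / energy,  # equals tokens/s per watt
    }
```

For example, a run that draws a steady 300 W for 2 seconds while producing 1,200 tokens consumes 600 J, which works out to 0.5 joules per token, or 2 tokens per joule.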

Cost

True infrastructure and runtime cost required to deliver production inference.
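
One simplified way to turn a measured throughput into a cost figure is shown below. This is a sketch under stated assumptions: the function name, the parameters, and the cost model itself (hourly infrastructure rate plus optional metered energy) are illustrative, and it ignores factors such as idle capacity, networking, storage, and staffing that a full cost analysis would include.

```python
def cost_per_million_tokens(hourly_infra_usd, tokens_per_s,
                            avg_watts=0.0, usd_per_kwh=0.0):
    """Estimate USD per one million generated tokens (hypothetical model).

    hourly_infra_usd: instance price or amortized hardware cost per hour
    tokens_per_s:     sustained throughput measured under load
    avg_watts, usd_per_kwh: optional energy term, e.g. for on-prem
                      hardware where power is billed separately
    """
    if tokens_per_s <= 0:
        raise ValueError("tokens_per_s must be positive")
    # Energy cost per hour: kW drawn times price per kWh.
    hourly_energy_usd = (avg_watts / 1000.0) * usd_per_kwh
    tokens_per_hour = tokens_per_s * 3600.0
    return (hourly_infra_usd + hourly_energy_usd) / tokens_per_hour * 1_000_000
```

With an hourly rate of 3.60 USD and a sustained 1,000 tokens per second, the estimate is about 1.00 USD per million tokens. Using sustained rather than peak throughput in the denominator matters here, since an optimistic throughput figure directly understates the cost.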

Built for Real Infrastructure

Vendor-neutral deployment intelligence across every major accelerator ecosystem. If it doesn't run in production, it doesn't count.

NVIDIA: H100, A100, L4, RTX (data center through edge)

AMD: MI300X, RX (ROCm inference across generations)

AWS: Graviton, Inferentia2 (custom silicon at cloud scale)

Expanding Platforms

Intel Gaudi, Google TPU, NPU — extending coverage to emerging accelerator architectures

- ✓ Prior-generation hardware lifecycle extension

- ✓ Hybrid & multi-platform deployments

- ✓ Vendor-neutral scoring across all silicon

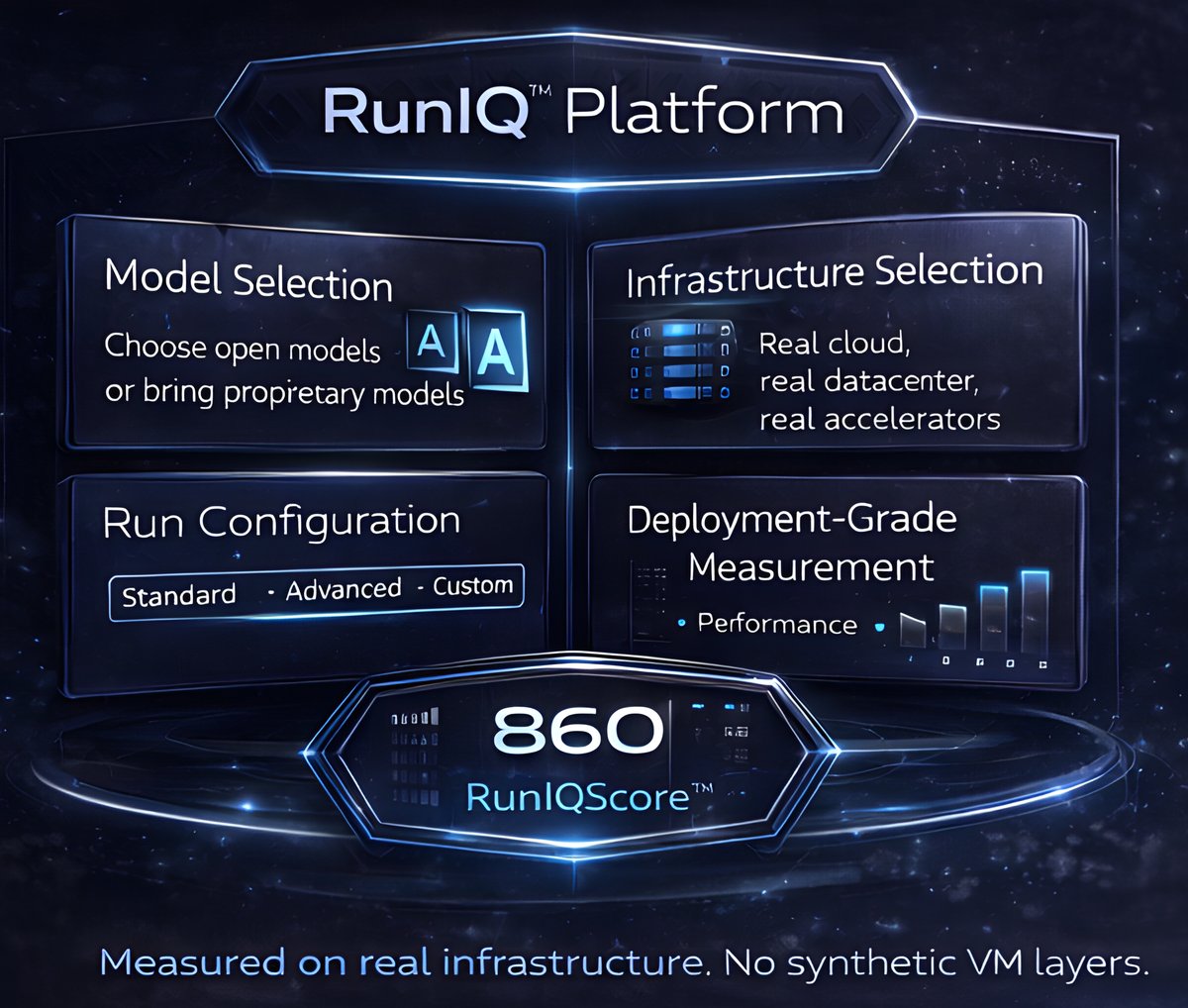

Platform + Services, Designed for Real Deployment

Services revenue first. Automated platform next. Building the deployment intelligence standard while generating revenue and deep datasets from day one.

Services-Led by Design

The RunIQ Labs team executes all model runs on real production infrastructure — no synthetic VM layers. Customer-defined configurations with full Performance-Power-Cost visibility.

- ✓ Benchmarking & Profiling — Real workloads, real environments

- ✓ Optimization Services — Compiler, runtime, configuration tuning

- ✓ Deployment Readiness & Certification — Production-grade validation

Automated Platform

Customers get direct access to the RunIQ configuration and submission interface, with SLA-based execution and transparent progress tracking. Benchmarking and PPC monitoring run continuously, with real-time RunIQScore updates.

- ✓ Self-Serve Submission — Automated model submission and execution

- ✓ Dashboard & Continuous Monitoring — Real-time PPC tracking

- ✓ Enterprise Integrations — Partner enablement at scale

Why It Matters

The forces driving deployment intelligence are accelerating. Infrastructure costs are exploding, power is the constraint, and enterprises are demanding vendor-neutral answers. A scoring standard will emerge — the question is who defines it.

AI Costs Are Exploding

Enterprise AI infrastructure spend is accelerating with no standardized way to measure return on deployment investment.

Power Is the Prime Constraint

Energy-driven TCO is reshaping every infrastructure decision. Performance-per-watt is no longer optional — it's the metric that matters.

Customers Demand Neutrality

Enterprises need deployment data they can trust — independent of the vendors selling them hardware.

A Scoring Standard Will Emerge

The industry will converge on a deployment scoring standard. RunIQ Labs AI is defining the deployment truth layer.

Tell Us About Your Models, Platforms, and Goals

A senior deployment expert will personally review your submission and respond with a scoped assessment.